You sometimes reshape a matrix into a tensor, right? Many different reshapes can be defined, but in vision research a reshape called Ket Augmentation (KA) seems to be used quite often.

I read Section 5 of this paper and implemented KA.

https://dl.acm.org/doi/abs/10.1109/TIP.2017.2672439

The input matrix is assumed to be 2^depth × 2^depth, and it is reshaped into a 4×4×...×4 tensor with depth modes. (depth is a natural number.)

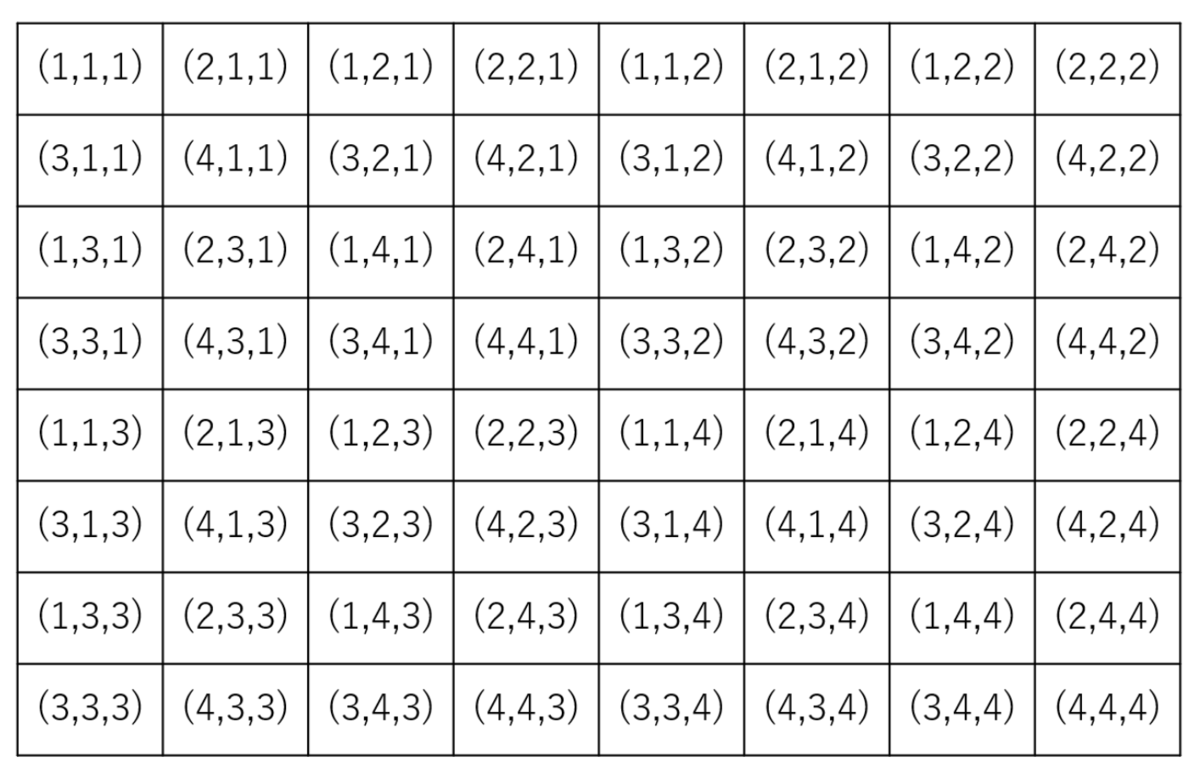

For example, take depth = 3, i.e. reshaping an 8×8 matrix into a 4×4×4 tensor. As in the figure, the table below shows which element of the reshaped tensor each element (i, j) of the 8×8 input ends up as.

Examples: element (3,3) of the input matrix becomes element (1,4,1) of the reshaped tensor, and element (5,3) of the input matrix becomes element (1,2,3).
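The mapping in these examples can also be computed directly from the bits of the indices. The following is a minimal sketch of my reading of the convention used in this post's implementation (row bit as the high bit, column bit as the low bit of each tensor index); the name `ka_index` is hypothetical, and the paper may order things differently.

```julia
# Hypothetical helper: tensor index of matrix element (i, j) under the
# convention of this post's implementation (an assumption, not the paper's definition).
function ka_index(i::Integer, j::Integer, depth::Integer)
    r, c = i - 1, j - 1                  # switch to 0-based indices
    return ntuple(depth) do k
        rowbit = (r >> (k - 1)) & 1      # k-th bit of the row index
        colbit = (c >> (k - 1)) & 1      # k-th bit of the column index
        2 * rowbit + colbit + 1          # position inside the 2×2 block, as 1:4
    end
end

ka_index(3, 3, 3)  # (1, 4, 1)
ka_index(5, 3, 3)  # (1, 2, 3)
```

The k-th tensor index records which quadrant of the 2×2 block at scale k the element falls into, with k = 1 being the finest scale.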

The Julia implementation looks like this.

    # Fn[i, j] is the linear index (1:4^depth) assigned to matrix element (i, j).
    function get_Fn(depth)
        Fp = [1 2; 3 4]
        Fn = Fp  # so that depth == 1 returns the 2×2 base block
        for n = 1:depth-1
            Fn_1 = Fp
            Fn_2 = Fn_1 .+ 4^n
            Fn_3 = Fn_2 .+ 4^n
            Fn_4 = Fn_3 .+ 4^n
            Fn = [Fn_1 Fn_2; Fn_3 Fn_4]
            Fp = Fn
        end
        return Fn
    end

    # Tn[idx] is the column-major linear index of tensor element idx.
    function get_Tn(depth)
        tensor_size = 4 * ones(Int, depth)
        Tn = zeros(Int, tensor_size...)
        c = 1
        for idx in CartesianIndices(Tn)
            Tn[idx] = c
            c += 1
        end
        return Tn
    end

    # InvFn[ Fn[i,j] ] = (i,j)
    function get_InvFn(depth)
        Fn = get_Fn(depth)  # built here instead of relying on a global Fn
        InvFn = Array{CartesianIndex}(undef, 4^depth)
        for i = 1:2^depth
            for j = 1:2^depth
                c = Fn[i, j]
                InvFn[c] = CartesianIndex((i, j))
            end
        end
        return InvFn
    end

    # InvTn[ Tn[i,j,k,l] ] = (i,j,k,l)
    function get_InvTn(depth)
        Tn = get_Tn(depth)  # built here instead of relying on a global Tn
        InvTn = Array{CartesianIndex}(undef, 4^depth)
        for idx in CartesianIndices(Tn)
            c = Tn[idx]
            InvTn[c] = idx
        end
        return InvTn
    end

    # tensor == reshape_to_tensor(reshape_to_mtx(tensor, InvTn, Fn), Tn, InvFn)
    function reshape_to_tensor(mtx, Tn, InvFn)
        depth = Int(log2(size(mtx, 1)))  # log2 is exact for powers of two
        high_order_tensor = zeros(4 * ones(Int, depth)...)
        for idx in CartesianIndices(high_order_tensor)
            m = Tn[idx]
            n = InvFn[m]
            high_order_tensor[idx] = mtx[n]
        end
        return high_order_tensor
    end

    # mtx == reshape_to_mtx(reshape_to_tensor(mtx, Tn, InvFn), InvTn, Fn)
    function reshape_to_mtx(tensor, InvTn, Fn)
        depth = ndims(tensor)
        mtx = zeros(2^depth, 2^depth)
        for i = 1:2^depth
            for j = 1:2^depth
                m = Fn[i, j]
                idx = InvTn[m]
                mtx[i, j] = tensor[idx]
            end
        end
        return mtx
    end
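As a cross-check on the lookup-table version above, the same reordering can apparently be written with `reshape` and `permutedims` alone. This is only a sketch under my reading of the index convention used in this post, and the name `ka_permutedims` is hypothetical. It splits the row and column indices into bits, interleaves them so that each (column bit, row bit) pair becomes one mode of size 4, and relies on Julia's column-major layout.

```julia
# Hypothetical alternative: KA via reshape + permutedims (a sketch, not the paper's code).
function ka_permutedims(mtx::AbstractMatrix)
    depth = round(Int, log2(size(mtx, 1)))
    @assert size(mtx) == (2^depth, 2^depth) "expected a 2^depth × 2^depth matrix"
    # Dimensions after this reshape: (r1, ..., r_depth, c1, ..., c_depth), one bit each.
    bits = reshape(mtx, ntuple(_ -> 2, 2 * depth))
    # Reorder to (c1, r1, c2, r2, ...): the column bit varies fastest inside each pair.
    perm = [isodd(m) ? depth + (m + 1) ÷ 2 : m ÷ 2 for m in 1:2*depth]
    # Merge each (c_k, r_k) pair into a single mode of size 4.
    return reshape(permutedims(bits, perm), ntuple(_ -> 4, depth))
end

mtx = collect(reshape(1:64, 8, 8))
T = ka_permutedims(mtx)
T[1, 4, 1] == mtx[3, 3]  # true
```

Unlike `reshape_to_tensor`, this sketch keeps the element type of the input instead of converting to `Float64`.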

Let's make an arbitrary matrix and reshape it.

    depth = 3
    mtx = rand(1:9, 2^depth, 2^depth)
    # 8×8 Matrix{Int64}:
    #  8  2  4  2  5  6  6  2
    #  3  7  2  9  2  5  8  1
    #  3  9  9  6  4  5  3  4
    #  1  1  9  7  9  8  7  2
    #  6  1  6  7  9  7  1  4
    #  4  5  4  9  3  4  5  8
    #  3  4  8  8  1  3  8  4
    #  5  7  8  5  6  1  6  5

    Fn = get_Fn(depth);
    Tn = get_Tn(depth);
    InvFn = get_InvFn(depth);
    InvTn = get_InvTn(depth);

    high_order_tensor = reshape_to_tensor(mtx, Tn, InvFn)
    # 4×4×4 Array{Float64, 3}:
    # [:, :, 1] =
    #  8.0  4.0  3.0  9.0
    #  2.0  2.0  9.0  6.0
    #  3.0  2.0  1.0  9.0
    #  7.0  9.0  1.0  7.0
    # [:, :, 2] =
    #  5.0  6.0  4.0  3.0
    #  6.0  2.0  5.0  4.0
    #  2.0  8.0  9.0  7.0
    #  5.0  1.0  8.0  2.0
    # [:, :, 3] =
    #  6.0  6.0  3.0  8.0
    #  1.0  7.0  4.0  8.0
    #  4.0  4.0  5.0  8.0
    #  5.0  9.0  7.0  5.0
    # [:, :, 4] =
    #  9.0  1.0  1.0  8.0
    #  7.0  4.0  3.0  4.0
    #  3.0  5.0  6.0  6.0
    #  4.0  8.0  1.0  5.0

To see whether this looks right, let's check a few elements.

    high_order_tensor[1,1,1] == mtx[1,1] # true
    high_order_tensor[4,1,1] == mtx[2,2] # true
    high_order_tensor[1,4,1] == mtx[3,3] # true
    high_order_tensor[3,2,3] == mtx[6,3] # true

Looks fine!

If you want to turn the tensor back into the matrix it was before the reshape,

    reshape_to_mtx(high_order_tensor, InvTn, Fn)

does the job.
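The inverse direction can likewise be sketched without lookup tables: split each mode of size 4 back into a (column bit, row bit) pair, then gather all row bits ahead of all column bits. As before, this assumes the index convention of this post's implementation, and `ka_inverse` is a hypothetical name rather than anything from the paper.

```julia
# Hypothetical inverse of KA via reshape + permutedims (a sketch under the
# index convention assumed in this post).
function ka_inverse(tensor::AbstractArray)
    depth = ndims(tensor)
    @assert all(==(4), size(tensor)) "expected a 4×4×...×4 tensor"
    # Dimensions after this reshape: (c1, r1, c2, r2, ...), one bit each.
    bits = reshape(tensor, ntuple(_ -> 2, 2 * depth))
    # Gather the row bits first, then the column bits.
    perm = vcat(2:2:2*depth, 1:2:2*depth)
    return reshape(permutedims(bits, perm), 2^depth, 2^depth)
end

T = collect(reshape(1:64, 4, 4, 4))
M = ka_inverse(T)
M[3, 3] == T[1, 4, 1]  # true
```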